I have noticed (from the original BERT paper) that in the MLM training procedure, the authors mask 15% of the words in a sentence. The masked words are distributed as follows: 80% are replaced with the [MASK] token (which makes perfect sense: it teaches the model to predict a word given its left and right context). The remaining 20% are split evenly, with 10% replaced by a random word and 10% left unchanged. For example, I don't understand the difference between.

The authors indicate: "The purpose of this is to bias the representation towards the actual observed word." My understanding is that this way the model learns to be influenced by the word it is trying to predict. That is, it considers not only the left and right parts of the sentence but also the word itself. Masking with a random word, in turn, would teach the model to actually consider the surrounding words, and since the percentage is very small (1.5% of all words), it would not confuse the model much, so this might be beneficial.

Our Random Pictionary Word generator is super useful if you don’t happen to have the cards lying around. Thinking of new words to draw all the time can be difficult and annoying, so if you’re looking for random Pictionary words to play with, this is the perfect place.

Random Adverb Generator: randomly generate adverbs. You can specify the number of adverbs generated, the length of each adverb, and so on.

The random.random() function generates random floating-point numbers in the range [0.0, 1.0). Syntax: random.random(). Parameters: this method does not accept any parameters. It returns values uniformly distributed between 0 and 1. (Note the opening square bracket and the closing parenthesis: they mean 0 is included but 1 is excluded.)
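To make the masking percentages concrete, here is a minimal Python sketch of the split using random.random(). It assumes the 80% / 10% / 10% breakdown from the BERT paper ([MASK] token, random word, unchanged) and, as a simplification, selects each word independently with probability 0.15; the reference implementation may pick positions differently. The names `mask_tokens`, `vocab`, and `select_prob` are hypothetical and chosen only for illustration.

```python
import random

MASK_TOKEN = "[MASK]"


def mask_tokens(tokens, vocab, select_prob=0.15, rng=None):
    """Corrupt a token list in the style of BERT's MLM objective.

    Returns (corrupted, labels). labels[i] holds the original token at
    every selected position and None elsewhere.
    Assumption: each token is selected independently with select_prob.
    """
    if rng is None:
        rng = random.Random()
    corrupted = list(tokens)
    labels = [None] * len(tokens)
    for i, token in enumerate(tokens):
        # rng.random() is uniform on [0.0, 1.0), so this selects ~15% of tokens.
        if rng.random() >= select_prob:
            continue
        labels[i] = token  # the model must predict the original token here
        roll = rng.random()
        if roll < 0.8:
            corrupted[i] = MASK_TOKEN          # 80%: replace with [MASK]
        elif roll < 0.9:
            corrupted[i] = rng.choice(vocab)   # 10%: replace with a random word
        # remaining 10%: leave the token unchanged
    return corrupted, labels


if __name__ == "__main__":
    sentence = "the quick brown fox jumps over the lazy dog".split()
    vocabulary = ["cat", "tree", "runs", "blue", "house"]
    corrupted, labels = mask_tokens(sentence, vocabulary, rng=random.Random(0))
    print(corrupted)
    print(labels)
```

Under these assumptions, a random-word replacement hits about 0.15 × 0.10 = 1.5% of all words, which matches the small percentage mentioned above.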

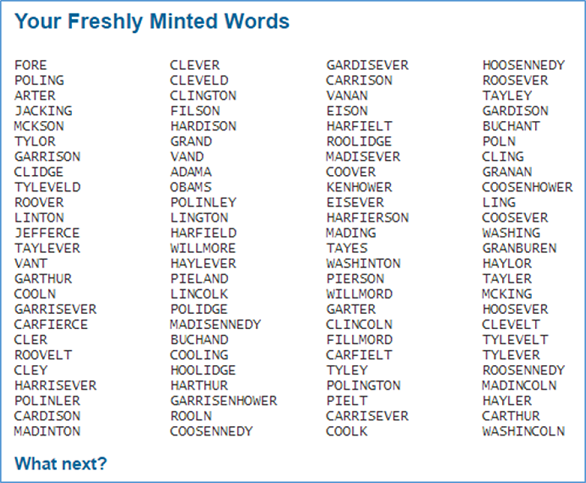

Random word brainstorming allows your team to solve business problems, create new inventions, or improve existing ideas. It is a simple, open-ended approach that can be used for individual or group brainstorming sessions.
